In this blog series, I am sharing the project work I did to implement simple palm (hand) posture detection using OpenCV and a CNN (Convolutional Neural Network). This project is a proof of concept showing how easily hand postures can be used to interact with computers.

Why is posture detection so important?

Posture detection (or recognition) is a challenging topic with wide usage in many scenarios, for instance health care, security and monitoring of suspicious activity, and human-computer interaction. Because of this, it is also an attractive problem to address in innovative and efficient ways.

Broadly, there are two approaches to the posture or gesture recognition problem:

- Sensor-based

- Computer-vision-based

Using computer vision, complemented by a very simple neural network, I trained a model to recognize different hand postures, each of which can trigger a different action. In other words, a deep learning approach.
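
Here is one way a recognised posture could drive an action: a lookup table gated by a confidence threshold. This is a minimal sketch, not the project's code. It assumes the classifier returns a dictionary of per-posture probabilities. The action names and the 0.8 threshold are hypothetical.

```python
from typing import Dict, Optional

# Hypothetical mapping from posture label to an action name. A real application
# would call into the OS, a media player, a game, etc. instead of returning a string.
ACTIONS: Dict[str, str] = {
    "Ok": "confirm",
    "Peace": "take_screenshot",
    "Punch": "close_window",
    "Stop": "pause_playback",
}

# Hypothetical threshold: below this, the prediction is treated as "no posture".
CONFIDENCE_THRESHOLD = 0.8


def dispatch(probabilities: Dict[str, float],
             threshold: float = CONFIDENCE_THRESHOLD) -> Optional[str]:
    """Return the action for the most likely posture, or None if the model is unsure."""
    label, prob = max(probabilities.items(), key=lambda item: item[1])
    if prob < threshold:
        return None  # not confident enough: do nothing rather than guess
    return ACTIONS.get(label)  # None for labels without an assigned action


if __name__ == "__main__":
    print(dispatch({"Ok": 0.05, "Peace": 0.90, "Punch": 0.03, "Stop": 0.02}))
    print(dispatch({"Ok": 0.40, "Peace": 0.30, "Punch": 0.10, "Stop": 0.20}))
```

The threshold stops a borderline frame, such as a hand caught halfway between two postures, from firing a random action.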

What is Deep Learning?

In case you haven’t noticed yet, deep learning is a buzzword that is hard to ignore. It is used in autonomous (driverless) cars: specially modified cars, equipped with various sensors, cameras and radar-based technologies, are trained (or learn by themselves) to drive on the road. The same goes for autonomous robots, drones, etc.

Deep learning has other applications as well, for instance natural language processing (e.g. in virtual assistants like Siri and Alexa), image classification (e.g. in the Google search engine) and medicine (e.g. identifying certain diseases).

Basically, deep learning is one approach to making computing devices intelligent enough to perform certain tasks on par with, or more efficiently than, human beings, by training neural networks with many layers. I think deep learning gained more hype once neural network architectures such as CNNs and RNNs became feasible to train. That was thanks to a huge leap in the computational power of CPUs/GPUs and the ease of implementing neural networks in various programming languages, especially Python & Ruby.

Anyway, enough theory. Like any technology enthusiast, I am intrigued by deep learning and wanted to learn more about it, so here is one of the fun hobby projects I implemented to understand it better.

As the title says, I have implemented hand posture recognition using the OpenCV computer vision library (via Python) and the Keras library for the Convolutional Neural Network.
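
For readers new to CNNs, the building block that gives them their name is easy to show in plain Python. Slide a small kernel over the image (convolution), keep the positive responses (ReLU), then downsample (max pooling). This is a teaching sketch only. Keras' `Conv2D` and `MaxPooling2D` layers do the same job with learned kernels, many channels and much faster code. The 5×5 image and the vertical-edge kernel are made up for illustration.

```python
from typing import List

Matrix = List[List[float]]


def conv2d(image: Matrix, kernel: Matrix) -> Matrix:
    """'Valid' 2D convolution with no padding and stride 1.

    Strictly this is cross-correlation (the kernel is not flipped), which is
    what deep learning libraries such as Keras compute in their Conv2D layers.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(feature_map: Matrix) -> Matrix:
    """Keep positive responses and set negative ones to zero."""
    return [[max(0.0, v) for v in row] for row in feature_map]


def max_pool(feature_map: Matrix, size: int = 2) -> Matrix:
    """Non-overlapping max pooling; leftover rows/columns are dropped."""
    out_h, out_w = len(feature_map) // size, len(feature_map[0]) // size
    return [
        [
            max(feature_map[i * size + di][j * size + dj]
                for di in range(size) for dj in range(size))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


if __name__ == "__main__":
    # Made-up 5x5 grayscale image: dark on the left, bright on the right.
    image = [[0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(5)]
    # Hand-picked vertical-edge kernel (a CNN would learn its kernels instead).
    kernel = [[-1.0, 0.0, 1.0] for _ in range(3)]
    features = conv2d(image, kernel)  # strong response where the edge is
    print(features)
    print(max_pool(relu(features)))
```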

Project components:

For this project, I used the following:

- Python implementation

- OpenCV 2 for computer vision

- Keras neural network API

- Theano & TensorFlow backend libraries

- Basic laptop camera for real-time video/image input

- Onboard CPU; no GPU is required, at least for this simple implementation

Trained Hand Postures:

In this implementation, I trained the model to recognise 4 hand postures:

- Ok

- Peace

- Punch

- Stop
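
A classifier for these four postures can be a small CNN whose last layer has four softmax outputs, one per posture. The following is a minimal sketch using the modern `tf.keras` API; the project itself used standalone Keras with Theano/TensorFlow backends. The 64×64 grayscale input size and all layer sizes are assumptions, not the author's actual architecture.

```python
import numpy as np
from tensorflow import keras

POSTURES = ["Ok", "Peace", "Punch", "Stop"]


def build_posture_cnn(input_shape=(64, 64, 1), num_classes=len(POSTURES)):
    """Small CNN: two conv/pool stages, then a dense classifier with a softmax output."""
    model = keras.Sequential([
        keras.Input(shape=input_shape),  # assumed 64x64 single-channel hand crops
        keras.layers.Conv2D(32, (3, 3), activation="relu"),
        keras.layers.MaxPooling2D((2, 2)),
        keras.layers.Conv2D(64, (3, 3), activation="relu"),
        keras.layers.MaxPooling2D((2, 2)),
        keras.layers.Flatten(),
        keras.layers.Dense(128, activation="relu"),
        keras.layers.Dropout(0.5),  # regularisation against overfitting (assumed choice)
        keras.layers.Dense(num_classes, activation="softmax"),  # one probability per posture
    ])
    model.compile(optimizer="adam",
                  loss="categorical_crossentropy",  # expects one-hot encoded labels
                  metrics=["accuracy"])
    return model


if __name__ == "__main__":
    model = build_posture_cnn()
    dummy_frame = np.zeros((1, 64, 64, 1), dtype="float32")  # stand-in for a camera frame
    probs = model.predict(dummy_frame, verbose=0)[0]  # untrained, so values are arbitrary
    print(dict(zip(POSTURES, probs.round(3))))
```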

The graph window visualises the predicted probability of each posture, i.e. how confident the model is in its recognition.
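
Probabilities like the ones in such a graph typically come from a softmax over the network's raw output scores (logits). The sketch below shows that step on its own. The four scores are made-up numbers. In a Keras model, a final `Dense(..., activation="softmax")` layer usually performs this step internally. This is not the project's plotting code.

```python
import math
from typing import Dict, List

POSTURES = ["Ok", "Peace", "Punch", "Stop"]


def softmax(scores: List[float]) -> List[float]:
    """Turn raw scores (logits) into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def posture_probabilities(scores: List[float]) -> Dict[str, float]:
    """Label each probability with its posture, ready to plot as a bar chart."""
    return dict(zip(POSTURES, softmax(scores)))


if __name__ == "__main__":
    probs = posture_probabilities([0.5, 3.0, 1.0, 0.2])  # made-up logits
    for name, p in probs.items():
        print(f"{name:>5}: {p:.2f}")
```

These per-frame probabilities measure the model's confidence. That is related to, but not the same as, accuracy measured over a labelled test set.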

Observations:

As you might have noticed in the video, it recognises:

- The Peace & Punch postures with the highest probability

- The Stop posture with medium probability

- The Ok posture with the lowest probability

There could be multiple reasons for this inaccuracy:

- Firstly, surrounding light can affect the camera output.

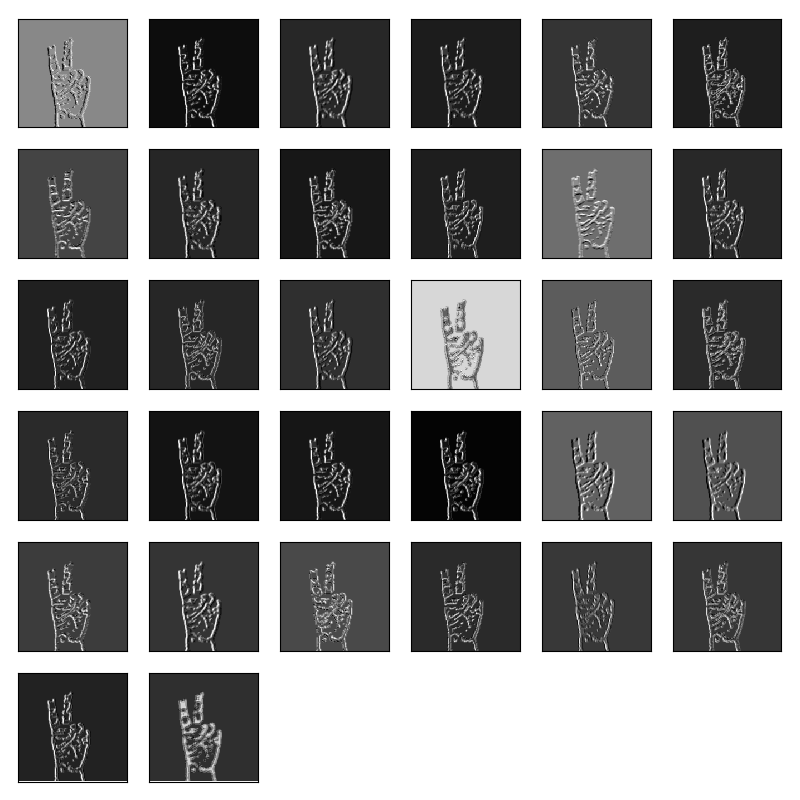

- Secondly, the small number of sample images: I used only 200 images per posture, so 200 × 4 = 800 images in total.

- Lastly, the short training duration: I trained for only around 11–12 epochs (an epoch is one complete pass of the whole training set, here the images, through the network).
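
This sketch puts the dataset size and epoch count in perspective by counting how many weight updates the network actually receives. The 80/20 train/validation split and the batch size of 32 are assumptions, since the post doesn't state them. Only the 200 images per posture, the 4 postures and the 11–12 epochs come from the project.

```python
import math


def training_schedule(images_per_class: int, num_classes: int, epochs: int,
                      batch_size: int = 32, validation_fraction: float = 0.2):
    """Count training images and weight updates for a dataset and epoch budget."""
    total = images_per_class * num_classes
    val = round(total * validation_fraction)  # images held out for validation
    train = total - val
    steps_per_epoch = math.ceil(train / batch_size)  # last batch may be partial
    return {
        "total_images": total,
        "train_images": train,
        "val_images": val,
        "steps_per_epoch": steps_per_epoch,
        "total_updates": steps_per_epoch * epochs,  # one update per mini-batch
    }


if __name__ == "__main__":
    print(training_schedule(images_per_class=200, num_classes=4, epochs=12))
```

Under these assumed settings, the network trains on only 640 images. That is 20 mini-batches per epoch, so 12 epochs amount to just 240 weight updates.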

In conclusion, I don’t want to make this post long and boring, so I will do a deep dive into my implementation in my next post.

I hope this helps. If you liked it, please like, share or leave a comment 🙂

Take care!
